Abstract

When we interact with others, the context in which our actions take place plays a major role in our behavior. This means that our understanding of objects, words, emotions, and social cues may differ depending on where we encounter them. Here, we explain how context affects daily mental processes, ranging from how people see things to how they behave with others. Then, we present the social context network model. This model explains how people process contextual cues when they interact, through the activity of the frontal, temporal, and insular brain regions. Next, we show that when certain diseases affect those brain areas, patients find it hard to process contextual cues. Finally, we describe new ways to explore social behavior through brain recordings in daily situations.

Introduction

Everything you do is influenced by the situation in which you do it. The situation that surrounds an action is called its context. In fact, analyzing context is crucial for social interaction and even, in some cases, for survival. Imagine you see a man in fear: your reaction depends on his facial expression (e.g., raised eyebrows, wide-open eyes) and also on the context of the situation. The context can be external (is there something frightening around?) or internal (am I calm or am I also scared?). Such contextual cues are crucial to your understanding of any situation.

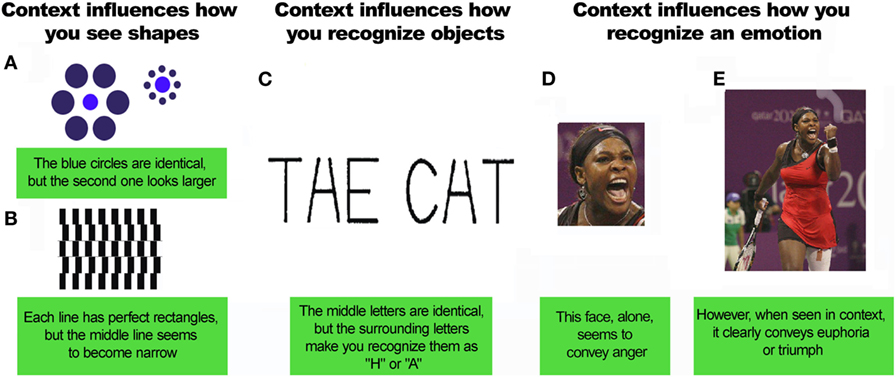

Context shapes all processes in your brain, from visual perception to social interactions [1]. Your mind is never isolated from the world around you. The specific meaning of an object, word, emotion, or social event depends on context (Figure 1). Context may be evident or subtle, real or imagined, conscious or unconscious. Simple optical illusions demonstrate the importance of context (Figures 1A,B). In the Ebbinghaus illusion (Figure 1A), rings of circles surround two central circles. The central circles are the same size, but one appears to be smaller than the other. This happens because the surrounding circles provide a context that affects your perception of the size of the central circles. Quite interesting, right? Likewise, in the Café Wall illusion (Figure 1B), context affects your perception of the lines’ orientation. The lines are parallel, but you see them as convergent or divergent. Try focusing on the middle line of the figure and checking it with a ruler. Contextual cues also help you recognize objects in a scene [2]. For instance, it can be easier to recognize letters when they are in the context of a word. Thus, you can see the same array of lines as either an H or an A (Figure 1C). Certainly, you did not read the phrase in the figure as “TAE CHT”, correct? Lastly, contextual cues are also important for social interaction. For instance, visual scenes, voices, bodies, other faces, and words shape how you perceive emotions in a face [3]. If you see Figure 1D in isolation, the woman may look furious. But look again, this time at Figure 1E. Here you see an ecstatic Serena Williams after she secured the top tennis ranking. This shows that recognizing emotions depends on additional information that is not present in the face itself.

- Figure 1 - Context affects how you see things.

- A,B. The visual context affects how you see shapes. C. Context also plays an important role in object recognition. Context-related objects are easier to recognize. “THE CAT” is a good example of contextual effects in letter recognition (reproduced with permission from Chun [2]). D,E. Context also affects how you recognize an emotion [by Hanson K. Joseph (Own work), CC BY-SA 4.0 (http://creativecommons.org/licenses/by-sa/4.0), via Wikimedia Commons].

Contextual cues also help you make sense of other situations. What is appropriate in one place may not be appropriate in another. Making jokes is OK when studying with your friends, but not OK during the actual exam. Also, context affects how you feel when you see something happening to another person. Picture someone being beaten on the street. If the person being beaten is your best friend, would you react in the same way as if he were a stranger? The reason why you probably answered “no” is that your empathy may be influenced by context. Context will determine whether you jump in to help or run away in fear. In sum, social situations are shaped by contextual factors that affect how you feel and act.

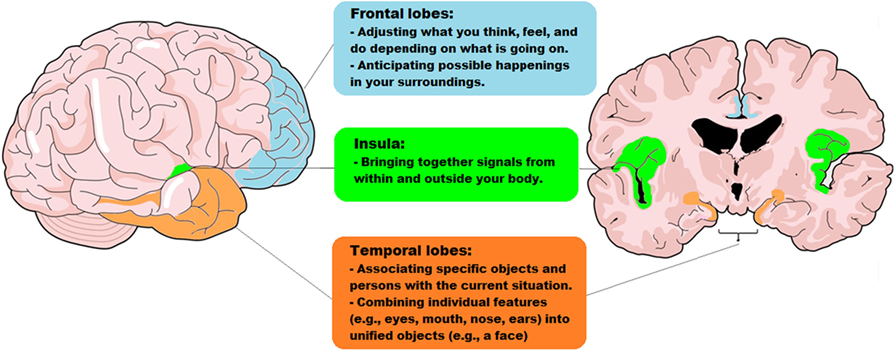

Contextual cues are important for interpreting social situations. Yet, they have been largely ignored in the world of science. To fill this gap, our group proposed the social context network model [1]. This model describes a brain network that integrates contextual information during social processes. This brain network combines the activity of several different areas of the brain, namely frontal, temporal, and insular brain areas (Figure 2). It is true that many other brain areas are involved in processing contextual information. For instance, the context of an object that you can see affects processes in the vision areas of your brain [4]. However, the network proposed by our model includes the main areas involved in social context processing. Even contextual visual recognition involves activity of temporal and frontal regions included in our model [5].

- Figure 2 - The parts of the brain that work together in the social context network model.

- This model proposes that social contextual cues are processed by a network of specific brain regions. This network is made up of frontal (light blue), temporal (orange), and insular (green) brain regions and the connections between these regions.

How Does Your Brain Process Contextual Cues in Social Scenarios?

To interpret context in social settings, your brain relies on a network of regions, including the frontal, temporal, and insular regions. Figure 2 shows the frontal regions in light blue. These regions help you update contextual information when you focus on something (say, the traffic light as you are walking down the street). That information helps you anticipate what might happen next, based on your previous experiences. If there is a change in what you are seeing (as you keep walking down the street, a mean-looking Doberman appears), the frontal regions will activate and update predictions (“this may be dangerous!”). These predictions will be influenced by the context (“oh, the dog is on a leash”) and your previous experience (“yeah, but once I was attacked by a dog and it was very bad!”). If a person’s frontal regions are damaged, that person will find it difficult to recognize the influence of context. Thus, the Doberman may not be perceived as a threat, even if this person has been attacked by other dogs before! The main role of the frontal regions is to predict the meaning of actions by analyzing the contextual events that surround the actions.

Figure 2 shows the insular regions, also called the insula, in green. The insula combines signals from within and outside your body. The insula receives signals about what is going on in your guts, heart, and lungs. It also supports your ability to experience emotions. Even the butterflies you sometimes feel in your stomach depend on brain activity! This information is combined with contextual cues from outside your body. So, when you see that the Doberman breaks loose from its owner, you can perceive that your heart begins to beat faster (an internal body signal). Then, your brain combines the external contextual cues (“the Doberman is loose!”) with your body signals, leading you to feel fear. Patients with damage to their insular regions are not so good at tracking their inner body signals and combining them with their emotions. The insula is critical for giving emotional value to an event.

Lastly, Figure 2 shows the temporal regions in orange. The temporal regions associate the object or person you are focusing on with the context. Memory plays a major role here. For instance, when the Doberman breaks loose, you look at its owner and realize that it is the kind man you met last week at the pet shop. Also, the temporal regions link contextual information with information from the frontal and insular regions. This system supports your knowledge that Dobermans can attack people, prompting you to seek protection.

To summarize, combining what you experience with the social context relies on a brain network that includes the frontal, insular, and temporal regions. Thanks to this network, we can interpret all sorts of social events. The frontal areas adjust and update what you think, feel, and do depending on present and past happenings. These areas also predict possible events in your surroundings. The insula combines signals from within and outside your body to produce a specific feeling. The temporal regions associate objects and persons with the current situation. So, all the parts of the social context network model work together to combine contextual information when you are in social settings.

When Context Cannot be Processed

Our model helps to explain findings from people with conditions that affect the brain. These people have difficulty processing contextual cues. For instance, people with autism find it hard to make eye contact and interact with others. They may show repetitive behaviors (e.g., constantly lining up toy cars) or excessive interest in a topic. They may also behave inappropriately and have trouble adjusting to school, home, or work. People with autism may fail to recognize emotions in others’ faces. Their empathy may also be reduced. One of our studies [6] showed that these problems are linked to a decreased ability to process contextual information. In that study, people with autism and healthy subjects performed tasks involving different social skills. Autistic people did poorly in tasks that relied on contextual cues (for instance, detecting a person’s emotion based on that person’s gestures or tone of voice). However, autistic people did well in tasks that did not require analyzing context, such as tasks that could be completed by following very general rules (for example, “never touch a stranger on the street”). Thus, the social problems that we often see in autistic people might result from difficulty in processing contextual cues.

Another disease whose symptoms may reflect problems processing contextual information is called behavioral variant frontotemporal dementia. Starting at about age 60, patients with this disease show changes in personality and in the way they interact with others. They may do improper things in public. Like people with autism, they may not show empathy or may not recognize emotions easily. Also, they find it hard to deal with the details of context needed to understand social events. All these changes may reflect general problems processing social context information. These problems may be caused by damage to the brain network described above.

Our model can also help explain findings in patients with damage to the frontal lobes or in those who have conditions such as schizophrenia or bipolar disorder [7]. Schizophrenia is a mental disorder characterized by atypical social cognition and an inability to distinguish between the real world and an imagined one (as in the case of hallucinations). Similar but milder problems appear in patients with bipolar disorder, another psychiatric condition, which is mainly characterized by alternating periods of depression and periods of elevated mood (called hypomania or mania).

In sum, the problems with social behavior seen in many diseases are probably linked to poor context processing after damage to certain brain areas, as proposed by our model (Figure 2). Future research should explore how correct this model is, adding more data about the processes and regions it describes.

New Techniques to Assess Social Behavior and Contextual Processing

The results mentioned above are important for scientists and doctors. However, they have a major limitation: they do not reflect how people behave in daily life! Most of the research findings came from tasks in a laboratory, in which a person responded to pictures or videos. Social life is much more complicated than sitting at a desk and pressing buttons when you see images on a computer, right? In daily life, people interact in contexts that constantly change.

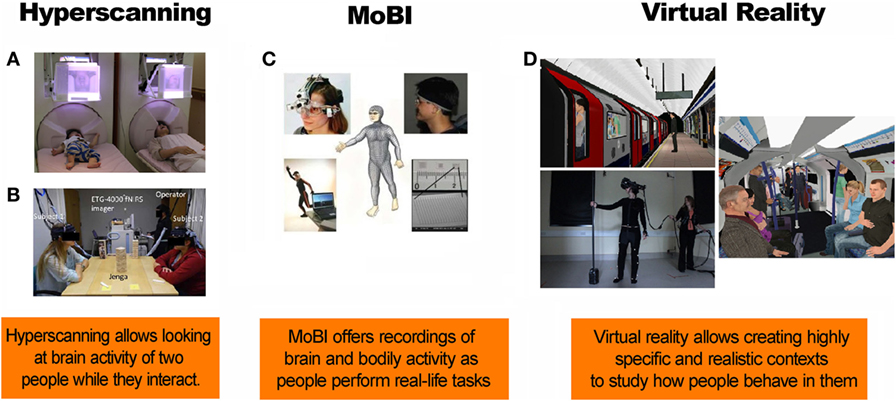

Fortunately, new methods allow scientists to assess real-life interactions. Hyperscanning is one of these methods. It allows measurement of the brain activity of two or more people while they perform activities together. For example, each subject can lie inside a separate scanner (a large tube containing powerful magnets) that detects changes in blood flow in the brain while the two people interact. Hyperscanning has also been used to study the brains of a mother and her child while they look at each other’s faces (Figure 3A).

- Figure 3 - New techniques to study processing of contextual cues.

- A. A mother and her infant look at each other’s facial expressions while their brain activity is recorded (reproduced with permission from Masayuki et al. [8]). B. Hyperscanning of people interacting with each other during a game of Jenga (reproduced with permission from Liu et al. [9]). C. A new method of studying brain activity, called mobile brain/body imaging (MoBI) (reproduced with permission from Makeig et al. [10]). D. Virtual reality simulations of a virtual train at the station and a virtual train carriage (reproduced with permission from Freeman et al. [11]).

Hyperscanning can also be done using electroencephalography (EEG). EEG measures the electrical activity of the brain. Special sensors called electrodes are attached to the head and connected by wires to a computer, which records the brain’s electrical activity. Hyperscanning can also use other kinds of sensors worn on the head. Figure 3B shows an example from a study that used near-infrared spectroscopy (NIRS), a light-based method, to measure brain activity in two individuals while they played Jenga [9]. Future research should apply these techniques to study the processing of social contextual cues.

One limitation of hyperscanning is that it typically requires participants to remain still. However, real-life interactions involve many bodily actions. Fortunately, a new method called mobile brain/body imaging (MoBI, Figure 3C) allows the measurement of brain activity and bodily actions while people interact in natural settings.

Another interesting approach is to use virtual reality. This technique uses simulated situations rather than real ones. However, it puts people in different situations that require social interaction. This is closer to real life than the tasks used in most laboratories. As an example, consider Figure 3D. This shows a virtual reality experiment in which participants traveled through a London Underground (tube) station. Our understanding of the way context impacts social behavior could be expanded in future virtual reality studies.

In sum, future research should use new methods for measuring real-life interactions. This type of research could help doctors understand what happens to the processing of social context cues in various brain injuries or diseases. These realistic tasks may be more sensitive than most of the laboratory tasks that are usually used to assess patients with brain disorders.

Glossary

Empathy: The ability to feel what another person is feeling, that is, to “place yourself in that person’s shoes.”

Autism: A general term for a group of complex disorders of brain development. These disorders are characterized by repetitive behaviors, as well as different levels of difficulty with social interaction and both verbal and non-verbal communication.

Behavioral Variant Frontotemporal Dementia: A brain disease characterized by progressive changes in personality and loss of empathy. Patients experience difficulty in regulating their behavior, and this often results in socially inappropriate actions. Patients typically start to show symptoms around age 60.

Hyperscanning: A novel technique to measure brain activity simultaneously from two or more people.

Virtual Reality: Computer technologies that use software to generate realistic images, sounds, and other sensations that replicate a real environment. This technique uses specialized display screens or projectors to simulate the user’s physical presence in this environment, enabling the user to interact with the virtual space and any objects depicted there.

Conflict of Interest Statement

The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest. The authors declare no competing financial interests.

Acknowledgements

This study was supported by grants from CONICYT/FONDECYT Regular (1170010), FONDAP 15150012, and the INECO Foundation.

References

[1] Ibanez, A., and Manes, F. 2012. Contextual social cognition and the behavioral variant of frontotemporal dementia. Neurology 78(17):1354–62. doi:10.1212/WNL.0b013e3182518375

[2] Chun, M. M. 2000. Contextual cueing of visual attention. Trends Cogn. Sci. 4(5):170–8. doi:10.1016/S1364-6613(00)01476-5

[3] Barrett, L. F., Mesquita, B., and Gendron, M. 2011. Context in emotion perception. Curr. Dir. Psychol. Sci. 20(5):286–90. doi:10.1177/0963721411422522

[4] Beck, D. M., and Kastner, S. 2005. Stimulus context modulates competition in human extrastriate cortex. Nat. Neurosci. 8(8):1110–6. doi:10.1038/nn1501

[5] Bar, M. 2004. Visual objects in context. Nat. Rev. Neurosci. 5(8):617–29. doi:10.1038/nrn1476

[6] Baez, S., and Ibanez, A. 2014. The effects of context processing on social cognition impairments in adults with Asperger’s syndrome. Front. Neurosci. 8:270. doi:10.3389/fnins.2014.00270

[7] Baez, S., Garcia, A. M., and Ibanez, A. 2016. The Social Context Network Model in psychiatric and neurological diseases. Curr. Top. Behav. Neurosci. 30:379–96. doi:10.1007/7854_2016_443

[8] Masayuki, H., Takashi, I., Mitsuru, K., Tomoya, K., Hirotoshi, H., Yuko, Y., and Minoru, A. 2014. Hyperscanning MEG for understanding mother-child cerebral interactions. Front. Hum. Neurosci. 8:118. doi:10.3389/fnhum.2014.00118

[9] Liu, N., Mok, C., Witt, E. E., Pradhan, A. H., Chen, J. E., and Reiss, A. L. 2016. NIRS-based hyperscanning reveals inter-brain neural synchronization during cooperative Jenga game with face-to-face communication. Front. Hum. Neurosci. 10:82. doi:10.3389/fnhum.2016.00082

[10] Makeig, S., Gramann, K., Jung, T.-P., Sejnowski, T. J., and Poizner, H. 2009. Linking brain, mind and behavior: The promise of mobile brain/body imaging (MoBI). Int. J. Psychophysiol. 73:95–100

[11] Freeman, D., Evans, N., Lister, R., Antley, A., Dunn, G., and Slater, M. 2014. Height, social comparison, and paranoia: An immersive virtual reality experimental study. Psychiatry Res. 218(3):348–52. doi:10.1016/j.psychres.2013.12.014